Our latest posts

Society, Arts & Entertainment

Unveiling the Secrets Behind Brad Pitt’s Love Life: Who is Ines de Ramon?

Arts & Entertainment

After ‘Barbie’, Margot Robbie Is Preparing A Movie Based On ‘The Sims’

Riding the wave of the global success of ‘Barbie’, Margot Robbie is now setting her sights on another pop culture icon: ‘The …

Business

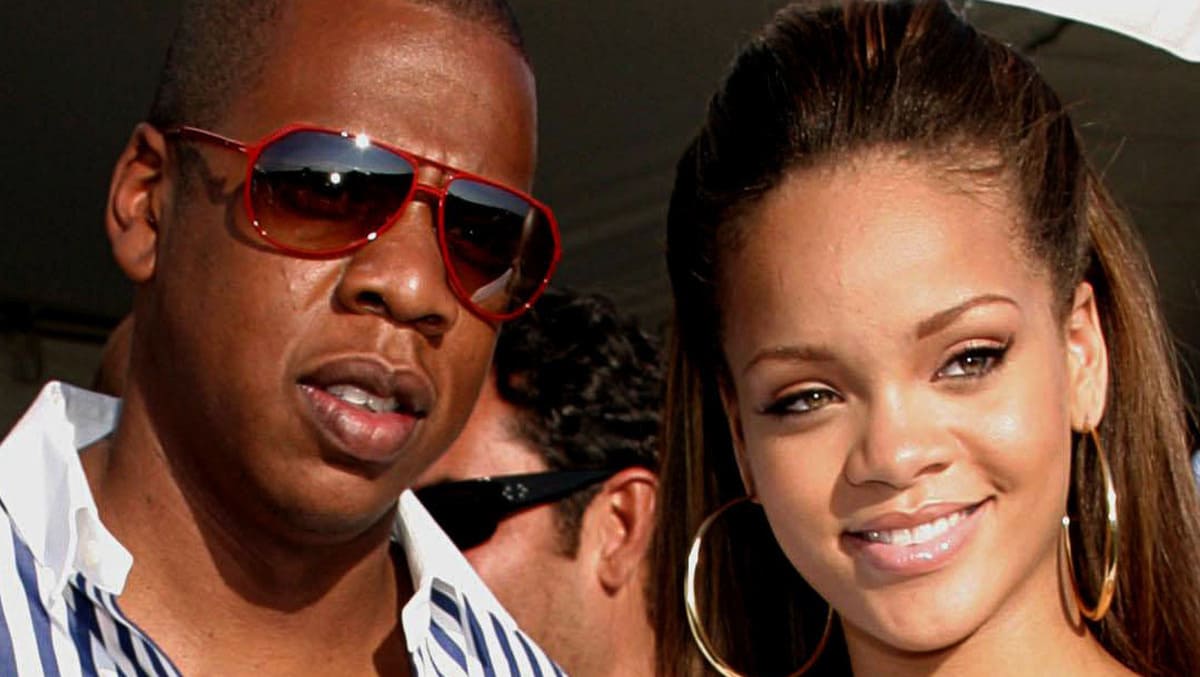

Rihanna And Jay-Z: Forbes Reveals The Amount Of Their Fortune

In the galaxy of stars that twinkle in the financial firmament, two names shine particularly …

Top posts

Arts & Entertainment, Business

Exploring the impressive net worth of singer and actress Ariana Grande

Arts & Entertainment, News

Celine Dion: How Much the Singer Is Worth and How She Makes Her Money

Arts & Entertainment, Business

Inside Mariah Carey’s Sprawling Empire: A Look at Her Net Worth in 2023

Our files

End of a Love Story: Why Golden Bachelor’s Gerry Turner & Theresa Nist Are Divorcing!

The Legendary Romance of Gerry Turner and Theresa Nist: Their love story started as a …

Can Sam Bankman-Fried Escape His 25-Year Sentence? Shocking Appeal Details Inside

In recent years, Sam Bankman-Fried has made headlines due to his involvement in a high-profile …

Coinbase and MicroStrategy Stock Prices Soar as Bitcoin Surges Prior to Halving Event

The cryptocurrency market is often a whirlwind of activity, with prices swinging with an energy …

Britney Spears Dating Ben Affleck? Britney Spears Claims To Have Had An Affair With The Actor

In the ever-twirling whirlwind of celebrity news, the latest breeze brings whispers of an unexpected …

BlackRock Launches a PEA-Eligible MSCI World ETF: “There is a Real Demand for These Products”

The world’s largest asset management firm, BlackRock, has recently launched a new exchange-traded fund (ETF) …

Uncovering the Mystery of Satoshi Nakamoto, Bitcoin’s Enigmatic Creator

In the world of cryptocurrencies, there is one name that stands above all others: Satoshi …

A Personal Reflection on the Impact of the September 11 Attacks

Memories from a New Yorker’s Perspective: As a lifelong resident of New York City, the …

Discovering the Secrets of England’s Most Famous Family in “The Royal House of Windsor”

A Century of The Windsors: Glamour and Scandal. To commemorate the 100th year of the …